Why your tracking setup is missing conversions (and how to spot it)

Most marketing teams are losing conversion data right now. Not because of a broken tag or a misconfigured event in Google Tag Manager, but because the way tracking works today is fundamentally unreliable. The real problem is that you don’t see what’s missing. Campaigns keep running, reports keep populating, and ROAS looks acceptable... until you compare it with reality.

This is what tracking signal loss looks like in practice. And it has a real cost that most performance teams have never calculated.

In this article you’ll learn how to identify signal loss in your tracking setup, how to calculate its real cost, and how Server-side Tracking closes the gaps that let conversions slip through.

Why your analytics tools don’t match your backend data

“With client-side solutions, one day you can measure 90% and the next day only 20%. You can’t send good data like that and you can’t train a machine either.” - Teun van Kleef, Lead Advertising & Data @ Social Brothers

Imagine a performance team running a campaign for an e-commerce brand. Google Ads reports 85 purchases. Meta reports 77. The backend (the actual Shopify order count) shows 143 purchases over the same period. Neither platform is lying. Each one only sees the conversions that made it through a fragile chain of browser execution, cookie acceptance, ad blocker filtering, and network transmission. The ones that did not make it through are invisible.
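To put numbers on that gap, here is a minimal Python sketch that turns the example's figures into a capture rate per platform. It assumes, for simplicity, that every backend order could have been tracked by both platforms, so it ignores attribution windows and cross-channel overlap. Treat the result as a rough upper bound on signal loss, not an exact measurement.

```python
# Figures from the example above: platform-reported purchases vs. backend orders.
# Simplifying assumption: every backend order could have been tracked by each platform.
backend_orders = 143
reported = {"Google Ads": 85, "Meta": 77}


def capture_rate(reported_count: int, actual_count: int) -> float:
    """Share of real conversions that a platform actually recorded."""
    if actual_count <= 0:
        raise ValueError("actual_count must be positive")
    return reported_count / actual_count


for platform, count in reported.items():
    rate = capture_rate(count, backend_orders)
    print(f"{platform}: {rate:.1%} captured, {1 - rate:.1%} invisible")
# Google Ads: 59.4% captured, 40.6% invisible
# Meta: 53.8% captured, 46.2% invisible
```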

That gap between what your ad platform reports and what actually happened is your tracking resilience gap. And it is almost certainly wider than you think.

In our experience working with thousands of companies on Server-side Tracking implementations, client-side setups typically miss anywhere from 10% to 40% of real conversion volume without anyone noticing. The exact number depends on your industry, your audience demographics, the browsers your users prefer, and how aggressively they protect their privacy.

For a team spending €50,000 per month on paid media, a 25% signal loss does not just mean incomplete reports. It means the algorithm is optimizing on 75% of the truth. It means the budget is being allocated using a distorted picture of reality. It means campaigns that appear to underperform are being cut, while campaigns that appear strong may only look that way because their conversions happen to survive the data loss better than those of other campaigns.
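A quick way to see what a 25% loss does to your numbers is to correct the tracked figures by an assumed capture rate. The sketch below uses the €50,000 budget from the paragraph above; the 1,000 tracked conversions are a hypothetical figure, and the function assumes the capture rate is the same across all campaigns. That assumption is exactly what breaks in practice, since different campaigns lose signal at different rates.

```python
def corrected_metrics(spend: float, tracked_conversions: float, capture_rate: float) -> dict:
    """Estimate real conversions and CPA from tracked data and an assumed capture rate."""
    if not 0 < capture_rate <= 1:
        raise ValueError("capture_rate must be in (0, 1]")
    if tracked_conversions <= 0:
        raise ValueError("tracked_conversions must be positive")
    estimated_actual = tracked_conversions / capture_rate
    return {
        "tracked_cpa": spend / tracked_conversions,
        "estimated_actual_conversions": estimated_actual,
        "estimated_actual_cpa": spend / estimated_actual,
        "untracked_conversions": estimated_actual - tracked_conversions,
    }


# €50,000 spend, hypothetical 1,000 tracked conversions, 25% signal loss.
metrics = corrected_metrics(spend=50_000, tracked_conversions=1_000, capture_rate=0.75)
for name, value in metrics.items():
    print(f"{name}: {value:,.2f}")
```

With these inputs the reported CPA is €50, while the estimated real CPA is €37.50, with roughly 333 conversions never reaching the platform.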

What is causing tracking signal loss?

Browser-level tracking restrictions

These restrictions have tightened significantly over the past 3 years. Safari's Intelligent Tracking Prevention (ITP) caps the lifetime of first-party cookies set through JavaScript at 7 days, and at 24 hours when a visitor arrives through a decorated link (a URL carrying tracking parameters) from a domain Safari classifies as a cross-site tracker (read about all the privacy updates here). Firefox blocks many third-party trackers by default through Enhanced Tracking Protection. Chrome's Privacy Sandbox changes have faced ongoing regulatory pressure.
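To see why a 7-day cap matters for attribution, here is a deliberately simplified Python model. It assumes a cookie expires a fixed time after its last write, and that an analytics client-ID cookie requests a lifetime of about 2 years (a hypothetical but typical value). Real ITP behaviour has more conditions than this, so read it as an illustration, not a description of WebKit internals.

```python
from datetime import datetime, timedelta
from typing import Optional

# Simplified model (assumption): the cookie expires a fixed time after its last write.
ITP_SCRIPT_COOKIE_CAP = timedelta(days=7)     # JavaScript-set first-party cookies
LINK_DECORATION_CAP = timedelta(hours=24)     # landing via a decorated link from a tracker domain
REQUESTED_LIFETIME = timedelta(days=730)      # hypothetical ~2-year client-ID cookie


def cookie_still_present(last_written: datetime, next_visit: datetime,
                         requested: timedelta = REQUESTED_LIFETIME,
                         cap: Optional[timedelta] = ITP_SCRIPT_COOKIE_CAP) -> bool:
    """True if the cookie written at `last_written` still exists at `next_visit`."""
    effective = requested if cap is None else min(requested, cap)
    return next_visit - last_written < effective


ad_click = datetime(2024, 5, 1, 12, 0)
purchase = ad_click + timedelta(days=10)

print(cookie_still_present(ad_click, purchase))            # Safari-style cap applied
print(cookie_still_present(ad_click, purchase, cap=None))  # no cap
```

In this model the purchase still happens on day 10, but under the cap the client ID that carried the ad click is already gone, so the buyer looks like a new visitor and the click gets no credit.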

Increasing ad blocker usage

Tools like uBlock Origin and Ghostery do not just block display ads: they actively prevent tracking pixels from firing. For audiences in technology, finance, or any sector where privacy awareness is high, blocker usage rates can exceed 30–40%.

Cross-device customer journeys

A user who discovers your product on a mobile phone, researches it on a desktop, and converts on a tablet leaves three separate device footprints. Client-side tracking relies on cookies stored in individual browsers on individual devices, so it cannot reliably stitch this journey together. You see three anonymous users where there is actually one customer.
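The difference between counting cookies and counting people comes down to which key you group events by. This Python sketch uses a hypothetical event log where the customer identifies themselves (for example by logging in or entering an email) on each device, which gives you a shared first-party `user_id`. Devices where the customer never identifies still fall back to their cookie, so stitching is only as good as the identifiers you actually collect.

```python
# Hypothetical event log: one customer, three devices, three cookies.
events = [
    {"device": "mobile",  "cookie_id": "ck-mobile-1",  "user_id": "cust-42", "event": "view_item"},
    {"device": "desktop", "cookie_id": "ck-desktop-7", "user_id": "cust-42", "event": "view_item"},
    {"device": "tablet",  "cookie_id": "ck-tablet-3",  "user_id": "cust-42", "event": "purchase"},
]


def count_cookie_visitors(log: list[dict]) -> int:
    """What purely cookie-based tracking sees: one 'user' per browser cookie."""
    return len({e["cookie_id"] for e in log})


def count_people(log: list[dict]) -> int:
    """Group by a shared first-party ID when present, else fall back to the cookie."""
    return len({e["user_id"] or e["cookie_id"] for e in log})


print(count_cookie_visitors(events))  # 3
print(count_people(events))           # 1
```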

None of these are new problems. But they are compounding. Each year, client-side tracking loses a little more ground. The teams that recognize this early and adapt their measurement infrastructure accordingly build a structural advantage over competitors who are still optimizing on increasingly incomplete signals.

What does tracking signal loss actually cost? A tangible example

The impact of unreliable tracking becomes concrete when you trace it through to actual business decisions.

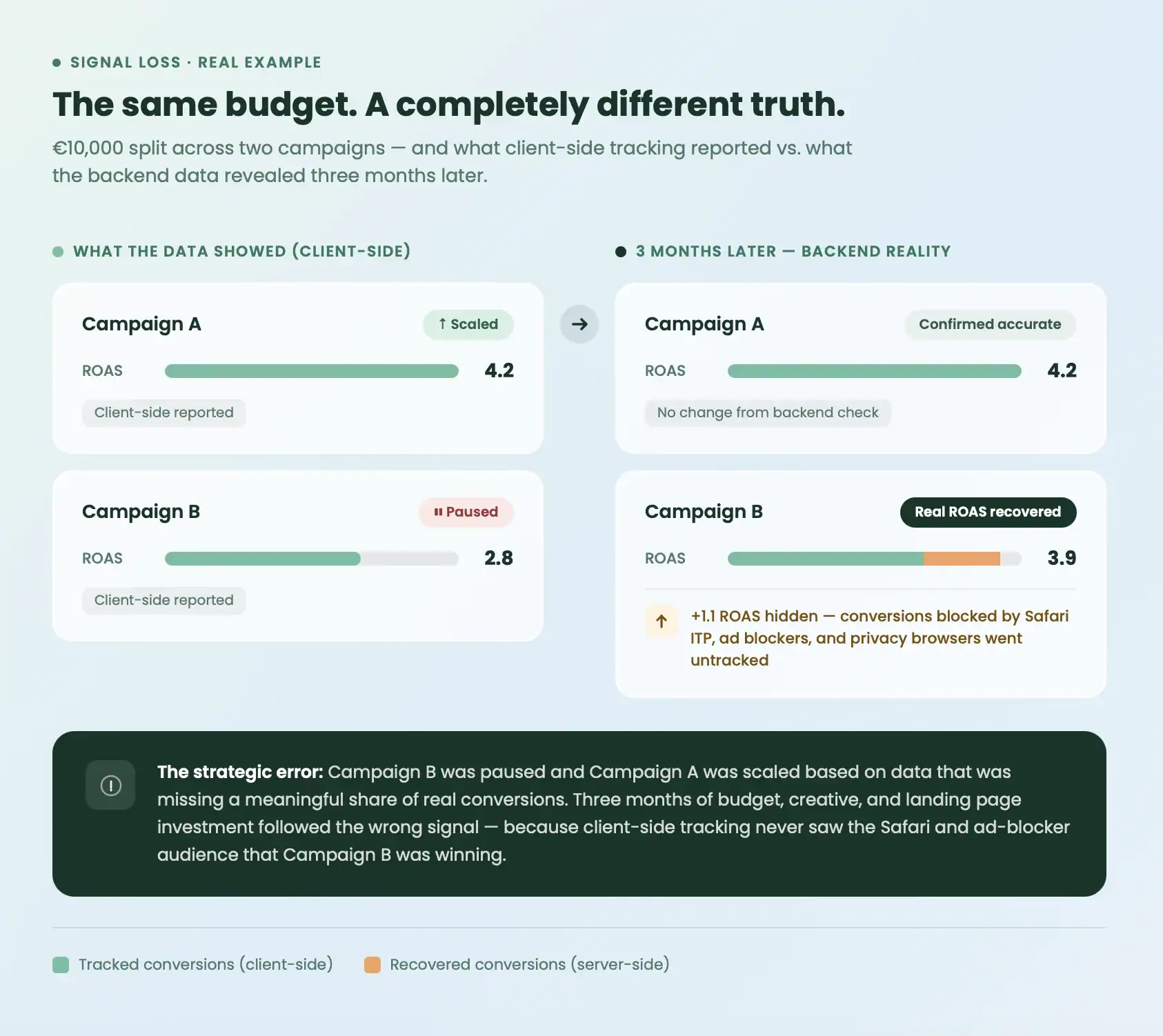

Consider a marketing team that invests €10,000 across two campaigns. Based on available conversion data, Campaign A appears to produce a ROAS of 4.2, and Campaign B appears to produce a ROAS of 2.8. The team scales Campaign A and pauses Campaign B.

Three months later, a deeper cross-reference of backend order data against marketing spend tells a different story. Campaign B was driving a segment of conversions that disproportionately failed to track on the client side: users on Safari with ITP active, users on privacy-focused browsers, and users with ad blockers installed. Once those conversions are attributed correctly, Campaign B's real ROAS turns out to be 3.9.
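Because ROAS is revenue divided by spend, and spend is the same in both of Campaign B's figures, the ratio of tracked to real ROAS tells you how much of B's conversion value was tracked. You do not need to know how the €10,000 was split. The sketch below also compares the two campaigns, under one assumption the example does not state: that Campaign A's 4.2 was accurate.

```python
def implied_capture_rate(tracked_roas: float, true_roas: float) -> float:
    """Share of a campaign's real conversion value that was tracked.

    ROAS = revenue / spend with identical spend in both figures, so the
    ratio of the two ROAS values equals tracked revenue / real revenue.
    """
    if true_roas <= 0:
        raise ValueError("true_roas must be positive")
    return tracked_roas / true_roas


rate_b = implied_capture_rate(2.8, 3.9)
print(f"Campaign B: {rate_b:.1%} of conversion value tracked")  # 71.8%

# Assumption (not stated in the example): Campaign A's tracked ROAS of 4.2 was accurate.
print(f"A vs B, tracked:   {4.2 / 2.8 - 1:.0%} better")   # 50%
print(f"A vs B, corrected: {4.2 / 3.9 - 1:.1%} better")   # 7.7%
```

A 50% lead that shrinks to under 8% once the missing conversions are counted is a very different basis for pausing a campaign.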

The consequence is not a reporting error. It is a strategic error. The budget was allocated on a false premise, with Campaign B paused on numbers that understated its return by more than a quarter. Creative and landing page investment followed that decision. The team spent three months optimizing a campaign hierarchy that did not reflect reality.

Tracking signal loss does not just distort your data. It distorts your decisions.

Five symptoms your tracking has a resilience problem

- Persistent discrepancies between platforms and backend systems. If your CRM, e-commerce platform, or analytics backend consistently shows more conversions than your ad platforms report, the gap is your signal loss. A 5–10% discrepancy can reflect normal attribution modeling variation. Anything above that warrants investigation.

- Retargeting audiences that shrink despite stable traffic. If your website traffic holds steady but your remarketing pool contracts, cookies are being blocked or expiring before they can be used for audience matching. This is a direct data quality issue, not a reach problem.

- Campaign performance that fluctuates without a clear operational cause. When ROAS swings significantly week-to-week without corresponding changes in budget, creative, or audience, it often reflects instability in the signal the algorithm is receiving, not actual changes in campaign performance.

- Increasing reliance on modeled or estimated data. If Google's modeled conversion estimates or Meta's modeled attribution have become your primary performance indicators, rather than verified measured events, your measured signal is already too thin to rely on for optimization.

- High ad blocker rates in your core audience. If your audience skews toward tech-savvy, privacy-aware, or European users, your ad blocker exposure is likely above average. Every user whose blocking tool prevents your tracking pixel from firing is a conversion that disappears from your reports completely.
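The first three symptoms can be checked with a few lines of code against data you probably already export. This Python sketch uses the article's 10% discrepancy threshold; the traffic and audience tolerances, the function names, and the sample series are hypothetical defaults for illustration, so tune them to your own baseline.

```python
from statistics import mean, pstdev

# The article treats a 5-10% gap as normal attribution variation.
DISCREPANCY_THRESHOLD = 0.10


def discrepancy(backend_conversions: int, platform_conversions: int) -> float:
    """Symptom 1: share of backend conversions the ad platform did not report."""
    if backend_conversions <= 0:
        raise ValueError("backend_conversions must be positive")
    return (backend_conversions - platform_conversions) / backend_conversions


def pct_change(first: float, last: float) -> float:
    return (last - first) / first


def audience_shrinks_despite_traffic(traffic: list[float], audience: list[float],
                                     traffic_tolerance: float = 0.05,
                                     audience_drop: float = 0.10) -> bool:
    """Symptom 2: traffic roughly flat while the remarketing pool contracts.

    Both thresholds are hypothetical defaults.
    """
    traffic_stable = abs(pct_change(traffic[0], traffic[-1])) <= traffic_tolerance
    audience_shrinking = pct_change(audience[0], audience[-1]) <= -audience_drop
    return traffic_stable and audience_shrinking


def roas_volatility(weekly_roas: list[float]) -> float:
    """Symptom 3: coefficient of variation of weekly ROAS (stdev / mean)."""
    return pstdev(weekly_roas) / mean(weekly_roas)


gap = discrepancy(backend_conversions=143, platform_conversions=85)
print(f"Discrepancy: {gap:.1%}, investigate: {gap > DISCREPANCY_THRESHOLD}")
print(audience_shrinks_despite_traffic([10_000, 10_200, 9_900], [5_000, 4_600, 4_200]))
print(f"ROAS volatility: {roas_volatility([3.1, 2.2, 3.8, 2.5]):.0%}")
```

A high volatility figure only means something when budget, creative, and audience were stable over the same weeks, so compare it against your change log before drawing conclusions.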

The structural solution: moving the measurement layer

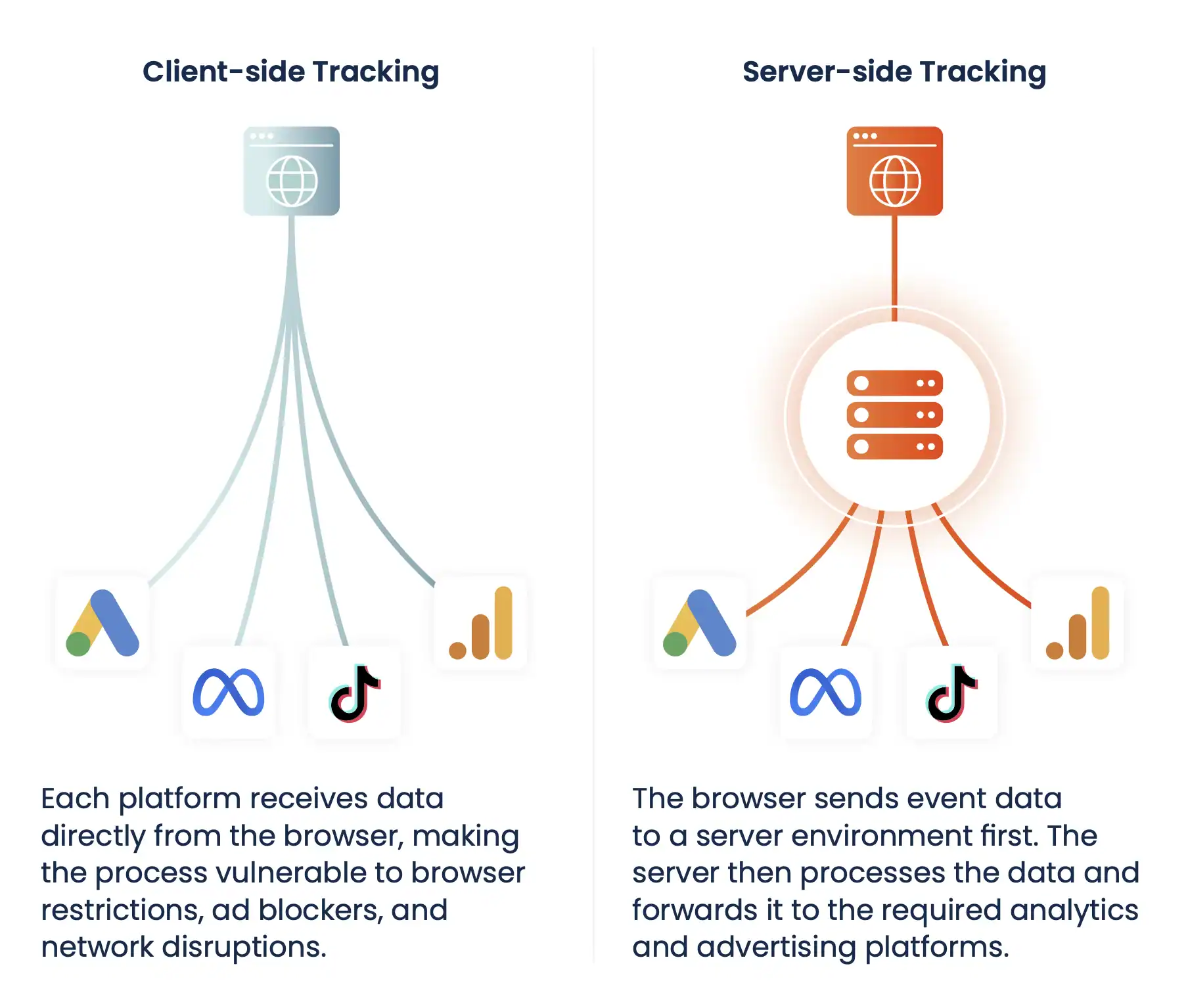

Client-side tracking fails because it depends on an environment (the user's browser) that you do not control. The browser decides whether to execute your tracking script, whether to send the data, and whether to accept or reject the cookies that make attribution possible.

Server-side Tracking changes the architecture. Instead of relying on the browser to both collect and transmit conversion data, your server becomes the collection point. The browser sends event data to a server endpoint that you control, hosted on your own subdomain. From there, your server forwards clean, enriched event data to the platforms that need it.
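As an illustration of the server-to-platform leg, here is a minimal Python sketch that takes an event received from the browser, checks it, and builds a GA4 Measurement Protocol request for a purchase. The input field names and the function name are hypothetical; the endpoint, the `measurement_id` and `api_secret` query parameters, and the `client_id` / `events` body follow the Measurement Protocol. In a real setup your server might also enrich the event here, for example by reading the order value from your own database instead of trusting the browser.

```python
import json
import urllib.parse
import urllib.request

GA4_MP_ENDPOINT = "https://www.google-analytics.com/mp/collect"
# Hypothetical shape of the payload your own browser script posts to your endpoint.
REQUIRED_FIELDS = ("client_id", "transaction_id", "value", "currency")


def build_ga4_purchase_request(browser_event: dict, measurement_id: str,
                               api_secret: str) -> urllib.request.Request:
    """Validate a browser event and turn it into a Measurement Protocol request."""
    missing = [field for field in REQUIRED_FIELDS if field not in browser_event]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    body = {
        "client_id": browser_event["client_id"],
        "events": [{
            "name": "purchase",
            "params": {
                "transaction_id": browser_event["transaction_id"],
                "value": float(browser_event["value"]),
                "currency": browser_event["currency"],
            },
        }],
    }
    query = urllib.parse.urlencode({"measurement_id": measurement_id, "api_secret": api_secret})
    return urllib.request.Request(
        f"{GA4_MP_ENDPOINT}?{query}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


# Placeholder credentials; send for real with urllib.request.urlopen(request, timeout=5).
request = build_ga4_purchase_request(
    {"client_id": "123.456", "transaction_id": "T-1001", "value": "59.90", "currency": "EUR"},
    measurement_id="G-XXXXXXX",
    api_secret="YOUR_API_SECRET",
)
```

The Measurement Protocol accepts malformed events without complaint, so it is worth testing payloads against Google's validation endpoint (`/debug/mp/collect`) before going live.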

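This shift removes the browser from the path between your site and the ad platforms. Ad blockers are far less likely to intercept a request to your own subdomain than one to a well-known tracking domain. ITP's 7-day cap on JavaScript-set cookies does not apply to a first-party cookie that your server sets in its HTTP response, provided the endpoint is genuinely first-party: Safari applies the same cap to server-set cookies when the subdomain is CNAME-cloaked to a third party or served from a third-party IP address. Script conflicts and page load failures cannot interrupt a server-to-server API call.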
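Here is what "set server-side" means in practice: the cookie arrives in a `Set-Cookie` HTTP response header from your endpoint instead of being written by JavaScript. This Python sketch builds such a header with the standard library's `http.cookies` module. The cookie name `fp_id`, the domain, and the one-year lifetime are hypothetical choices; in production you would generate the value with something like `secrets.token_urlsafe()`.

```python
from http.cookies import SimpleCookie


def first_party_cookie_header(name: str, value: str, domain: str,
                              max_age_days: int = 365) -> str:
    """Build the value of a Set-Cookie header for a server-set first-party cookie."""
    cookie = SimpleCookie()
    cookie[name] = value
    morsel = cookie[name]
    morsel["domain"] = domain                           # shared across your subdomains
    morsel["path"] = "/"
    morsel["max-age"] = max_age_days * 24 * 60 * 60     # lifetime in seconds
    morsel["secure"] = True                             # only sent over HTTPS
    morsel["httponly"] = True                           # not readable from page JavaScript
    morsel["samesite"] = "Lax"
    return morsel.OutputString()


header = first_party_cookie_header("fp_id", "abc123", "example.com")
print(f"Set-Cookie: {header}")
```

Marking the cookie `HttpOnly` also means page scripts cannot read or overwrite it, which keeps the identifier under your server's control.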

The result is that a meaningfully higher share of your actual conversions makes it into the data your platforms use for reporting and optimization. Google Ads, Meta Ads, and GA4 all support server-side event ingestion, through Google's Enhanced Conversions, Meta's Conversions API (CAPI), and GA4's Measurement Protocol respectively. Setting these up through a server-side tracking infrastructure like TAGGRS means you can start recovering signal without rebuilding your entire tag management setup from scratch.
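To make the Meta side concrete, here is a minimal Python sketch of a single Purchase event for the Conversions API. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `user_data`, `custom_data`) follow Meta's documented event shape, and the email is trimmed, lowercased, and SHA-256 hashed before it leaves your server. The function names and sample values are hypothetical. The resulting `payload` would be POSTed as JSON, together with an access token, to the Graph API events endpoint for your pixel (`graph.facebook.com/<version>/<PIXEL_ID>/events`).

```python
import hashlib
import time
from typing import Optional


def hash_email(email: str) -> str:
    """Trim and lowercase the address, then return its SHA-256 hex digest."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_capi_purchase(event_id: str, email: str, value: float, currency: str,
                        event_source_url: str, client_ip: str, user_agent: str,
                        event_time: Optional[int] = None) -> dict:
    """One Purchase event in the shape the Conversions API expects."""
    return {
        "event_name": "Purchase",
        # Unix timestamp in seconds.
        "event_time": int(time.time()) if event_time is None else event_time,
        # Reuse the browser pixel's event ID so Meta can deduplicate the pair.
        "event_id": event_id,
        "action_source": "website",
        "event_source_url": event_source_url,
        "user_data": {
            "em": [hash_email(email)],
            # IP address and user agent are sent unhashed.
            "client_ip_address": client_ip,
            "client_user_agent": user_agent,
        },
        "custom_data": {"currency": currency, "value": value},
    }


payload = {"data": [build_capi_purchase(
    event_id="order-1001", email="  Jane.Doe@Example.com ", value=59.90,
    currency="EUR", event_source_url="https://shop.example.com/checkout/thank-you",
    client_ip="203.0.113.7", user_agent="Mozilla/5.0",
)]}
```

Sending the same `event_id` from both the browser pixel and the server is what lets you run both in parallel during a migration without double-counting purchases.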

The real question: how resilient is your setup?

Most teams already suspect something is wrong. The real challenge now is understanding:

- how big the gap actually is

- where it comes from

- and what to do about it

That’s exactly what our guide below helps you answer.

Diagnose your tracking setup (free guide)

The guide “How resilient is your tracking setup?” walks you through:

- how to identify where signal loss happens

- how to assess your current tracking reliability

- what a realistic roadmap toward Server-side Tracking looks like

It’s not theory. It’s a structured diagnostic based on real implementations.