What is GEO and why Server-Side Tracking is key

This article explains what Generative Engine Optimisation (GEO) is, how it differs from classic SEO, why most websites have a blind spot right now, and what agencies and marketing teams can do about it.

Search is changing. For a long time, getting found online meant one main thing: ranking high on Google. But more and more people now get their answers directly from AI tools like ChatGPT, Perplexity or Google's AI Overviews, without clicking a single link.

This shift changes the game. Anyone working in digital marketing or data analysis needs to understand what's happening and what they can actually do about it.

What GEO actually means

Generative Engine Optimisation, or GEO for short, means preparing content so AI-powered search systems (like ChatGPT, Perplexity or Google's AI Overviews) find it, understand it, and cite it as a source.

Classic SEO helps you rank in a list of links. GEO helps you get mentioned in an AI-generated answer.

That's a real difference. If someone asks Perplexity "How do I track conversions without cookies?", they get a ready-made answer, not ten blue links. That answer is built from sources the system considers reliable and relevant. GEO is the work of making sure your content is among them.

Where GEO and SEO differ

SEO and GEO share a common base: good content, clear structure, a trustworthy website. But there are real differences.

| | SEO | GEO |
|---|---|---|
| Goal | Rank high in SERPs | Be cited by AI |
| Success metrics | Clicks, rankings, impressions | Mentions, citations, brand presence |
| What matters most | Keywords, backlinks, page speed | Authority, clarity, depth |
| Measurement | GA4, Search Console | GA4 via server-side tracking, brand monitoring |

The biggest difference: With SEO, someone still has to click your link. With GEO, the AI system reads your content, summarizes it, and gives the user a direct answer. Your brand might get mentioned, but a click isn't guaranteed.

That makes measurement harder. And that's exactly where most companies are in the dark right now.

Why AI systems visit your website

Tools like ChatGPT and Perplexity don't invent their answers from nothing. They're trained on huge amounts of web content, and many also crawl the web in real time for current information.

When they visit your site, they send an automated visitor called a bot. This bot reads your page content much like a human visitor would, only far faster and, in most cases, without executing JavaScript. Why that's so important comes next.

Known AI crawlers include, for example:

- GPTBot and ChatGPT-User (OpenAI)

- PerplexityBot (Perplexity)

- ClaudeBot (Anthropic)

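- Google-Extended (Google's AI products; note that this is a robots.txt control token, and the crawling itself is done by Google's regular crawlers)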

- Meta-ExternalAgent (Meta)

These crawlers identify themselves with a recognizable signal, the so-called User-Agent. That's a short string, sent with every request, that identifies who's visiting your site. With the right setup, you can recognize these visits and analyze which pages catch the interest of AI systems.
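The User-Agent check described above can be sketched in a few lines of Python. This is a minimal illustration, not an official or complete list: `KNOWN_AI_BOTS` and `identify_ai_bot` are hypothetical names, the tokens come from the crawler names listed above, and vendors can add or rename crawlers at any time.

```python
# Hypothetical lookup table: token to look for in the User-Agent -> operator.
# Real User-Agent strings carry extra version and URL details, so we match
# on substrings and ignore upper/lower case.
# Google-Extended is left out on purpose: it is a robots.txt control token,
# not a string that shows up in the User-Agent header of a request.
KNOWN_AI_BOTS = {
    "GPTBot": "OpenAI",
    "ChatGPT-User": "OpenAI",
    "PerplexityBot": "Perplexity",
    "ClaudeBot": "Anthropic",
    "Meta-ExternalAgent": "Meta",
}


def identify_ai_bot(user_agent):
    """Return (bot_token, operator) if a known token is present, else None."""
    ua = (user_agent or "").lower()
    for token, operator in KNOWN_AI_BOTS.items():
        if token.lower() in ua:
            return token, operator
    return None


# Simplified example strings, for illustration only.
print(identify_ai_bot("Mozilla/5.0 (compatible; GPTBot/1.0)"))   # ('GPTBot', 'OpenAI')
print(identify_ai_bot("Mozilla/5.0 (Windows NT 10.0) Chrome"))   # None
```

Keep in mind that a User-Agent is self-declared and can be spoofed, so a match is a strong hint rather than proof of who is visiting.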

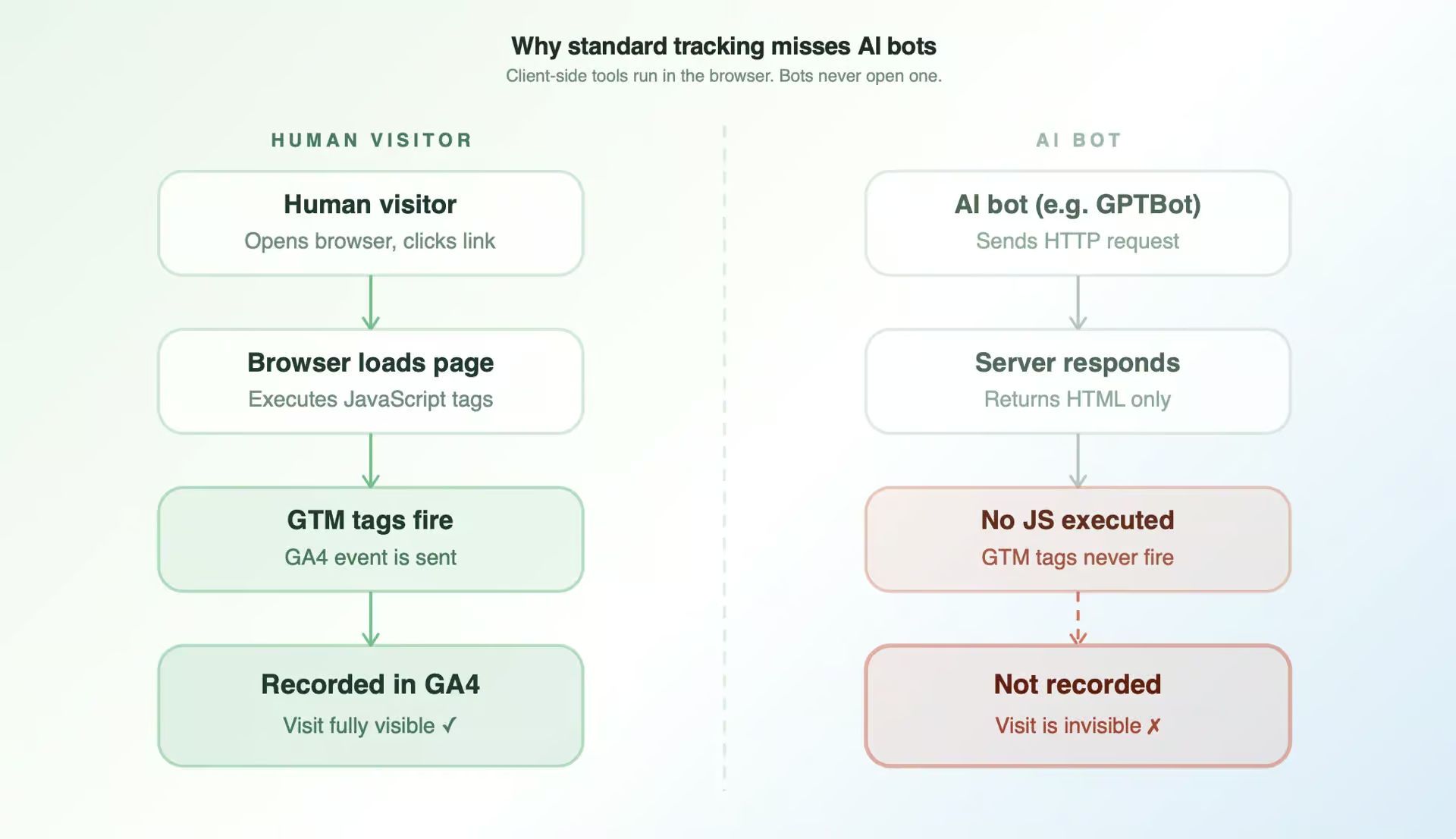

Why traditional tracking doesn't see any of this

This is especially relevant for everyone working with data and tracking. Most websites use client-side tracking: tools like Google Tag Manager or GA4 (Google's current analytics solution) that run in the user's browser. That works great for human visitors. But AI bots don't behave like humans. As a rule, they don't open a browser, don't execute JavaScript, and don't trigger tracking tags.

The result? Every time an AI bot visits your site, nothing lands in your analytics tool. The visit simply doesn't exist.

That means most companies don't know:

- How often AI systems crawl their content

- Which pages LLM bots (LLM stands for Large Language Model, the tech behind tools like ChatGPT) are particularly interested in

- Whether their content gets read before it's cited anywhere

That's not a small measurement gap. That's a blind spot right where GEO begins.

Why clean first-party data is the key

Before we get to the solution, a quick step back is worth it.

First-party data is data you collect directly from your own website via your own setup, without detours through third parties. It's considered the most reliable data source because no one else filters, blocks, or changes it.

For GEO, clean first-party data is crucial for one simple reason: You can only optimize what you can measure. If you don't know which pages AI systems visit, you can't make informed decisions about which content to improve or expand.

The problem with bad or incomplete data is that it often doesn't show up right away.

Here are a few examples of how this looks in practice:

Example 1: the page no one seems to know

You've published a detailed article on server-side tracking for e-commerce. It ranks okay in Google, but it doesn't appear in any AI answers. With client-side tracking, you only see human visitors. What you don't see: PerplexityBot crawls the page regularly, but the content is too generic to be cited. Without server-side tracking, you're completely missing this clue.

Example 2: the blind spot in bot traffic

GPTBot visits your homepage three times a week. Your most important product pages, on the other hand, are barely crawled. With server-side tracking, you'd see this pattern and understand that your internal linking isn't guiding AI bots to where your real value lies.

Example 3: no signal, no optimization

Without data on LLM bot visits, you optimize by gut feeling. You write articles because you think they're relevant, not because you see that AI systems are actively seeking those topics.

In all three cases, the problem isn't the content itself. It's the missing data foundation.

How server-side tracking solves it

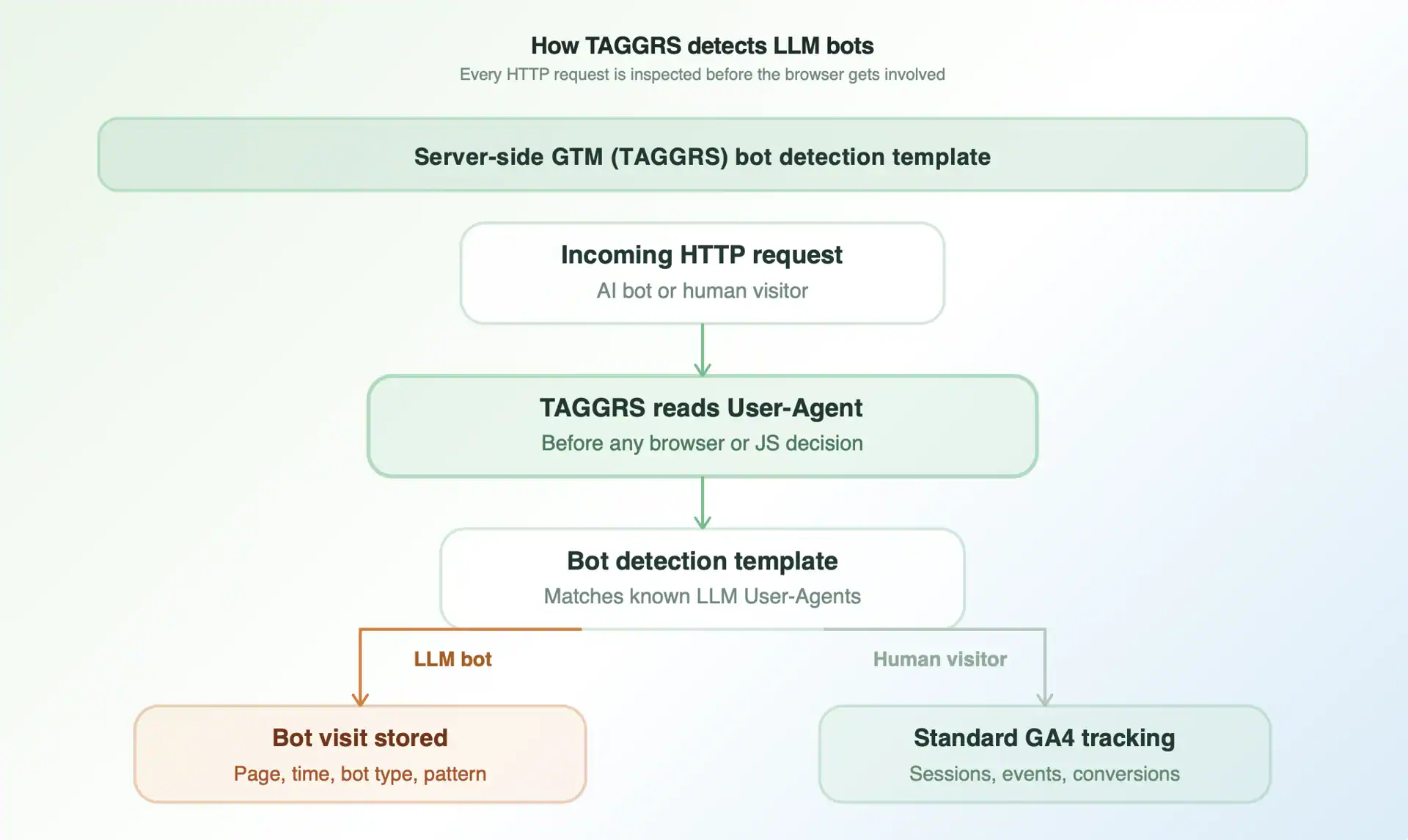

Server-side tracking sits one level deeper than client-side tracking. Every request arrives at your server first, regardless of whether the browser or bot on the other end goes on to execute any JavaScript.

That means: Every crawl, every bot visit, every HTTP request leaves a trace. You can read the User-Agent, identify the bot, and save the visit with full context, for example with our TAGGRS Bot Detection Tool.

We've built a GTM template for this. It checks the User-Agent at the server level, matches it against a list of known LLM bots, and gives you a clear signal back: Was there a known LLM bot here just now? On which page? When?

The template is available on GitHub.
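To make the three questions (known LLM bot? which page? when?) concrete, here is a rough Python sketch that reads a single line of a web server access log. It is not the TAGGRS template, which runs inside server-side GTM. It assumes the widely used Combined Log Format, and `LOG_PATTERN`, `AI_BOT_TOKENS` and `detect_bot_visit` are hypothetical names chosen for illustration.

```python
import re
from datetime import datetime

# Assumption: logs use the Combined Log Format, e.g.
# IP - - [12/Mar/2025:08:15:42 +0000] "GET /page HTTP/1.1" 200 5123 "referrer" "user agent"
LOG_PATTERN = re.compile(
    r'^(?P<ip>\S+) \S+ \S+ \[(?P<time>[^\]]+)\] '
    r'"(?P<method>\S+) (?P<path>\S+) [^"]*" '
    r'(?P<status>\d{3}) \S+ "(?P<referrer>[^"]*)" "(?P<user_agent>[^"]*)"$'
)

# Illustrative, incomplete token list based on the crawlers named in this article.
AI_BOT_TOKENS = ["GPTBot", "ChatGPT-User", "PerplexityBot", "ClaudeBot", "Meta-ExternalAgent"]


def detect_bot_visit(log_line):
    """Return {'bot', 'page', 'time'} for a known AI bot request, else None."""
    match = LOG_PATTERN.match(log_line.strip())
    if match is None:
        return None  # line is not in the assumed format
    user_agent = match.group("user_agent").lower()
    for token in AI_BOT_TOKENS:
        if token.lower() in user_agent:
            return {
                "bot": token,
                "page": match.group("path"),
                "time": datetime.strptime(match.group("time"), "%d/%b/%Y:%H:%M:%S %z"),
            }
    return None  # a regular visitor or an unknown bot


# Made-up log line for illustration (documentation IP range, simplified User-Agent).
example = (
    '203.0.113.7 - - [12/Mar/2025:08:15:42 +0000] '
    '"GET /blog/server-side-tracking HTTP/1.1" 200 5123 "-" '
    '"Mozilla/5.0 (compatible; PerplexityBot/1.0)"'
)
print(detect_bot_visit(example))
```

The point of the sketch is the order of operations: the request is recorded on the server first, so the bot is visible even though it never runs a tracking tag.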

With this data, you can, for example:

- See which pages are crawled most frequently by which AI systems

- Recognize patterns in which content types attract particularly high bot traffic

- Compare which crawled pages actually get cited in AI answers and which don't

- Base content decisions on real data instead of assumptions
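The first and third points in the list above boil down to simple counting and set arithmetic. The sketch below assumes you already have a list of detected bot visits and a separately collected set of pages you have seen cited in AI answers. The function names and the sample data are made up for illustration.

```python
from collections import Counter


def crawl_counts(visits):
    """Count visits per (page, bot) pair."""
    return Counter((visit["page"], visit["bot"]) for visit in visits)


def crawled_but_not_cited(visits, cited_pages):
    """Pages that AI bots crawled but that were never observed as a cited source."""
    crawled = {visit["page"] for visit in visits}
    return sorted(crawled - set(cited_pages))


# Made-up sample data, not real measurements.
sample_visits = [
    {"page": "/", "bot": "GPTBot"},
    {"page": "/", "bot": "GPTBot"},
    {"page": "/blog/server-side-tracking", "bot": "PerplexityBot"},
    {"page": "/pricing", "bot": "ClaudeBot"},
]
sample_cited_pages = {"/pricing"}  # assumption: gathered by hand or via brand monitoring

for (page, bot), count in crawl_counts(sample_visits).most_common():
    print(page, bot, count)

print(crawled_but_not_cited(sample_visits, sample_cited_pages))
```

Pages that show up in the second list are crawled but not cited, which makes them natural candidates for a content review.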

What makes good GEO content

AI systems recognize useful content surprisingly well. They tend to cite sources that:

- Give a clear, direct answer to a specific question

- Are structured (headings, bullet points, short paragraphs)

- Take a clear stance or share specific knowledge instead of just summarizing what others have said

- Cover a topic in depth and address multiple aspects

That last point is often underestimated. A 300-word article that just scratches the surface has a much smaller chance of being cited than a detailed article that fully explains a concept, names common mistakes, and references related topics.

That's why generic, thin content is losing so badly right now. If an AI system finds five better explanations on the same topic, yours drops out.

GEO doesn't replace SEO. The basics still apply: a fast, well-structured website, clearly crawlable content, strong internal linking between related pages, and trusted backlinks from relevant sources. But GEO adds a new layer. You're no longer optimizing just for a ranking algorithm, but for a system that reads your content and decides whether it's relevant enough and your domain has enough trust to be cited in a direct answer.

What this means for agencies

If you work at an agency or are responsible for tracking and data for clients, you'll notice: GEO is becoming a real topic in client conversations. The question "Do we actually show up when people use ChatGPT?" comes up more and more often. And most agencies don't have an answer yet.

That's a gap you can close. And the entry point is more concrete than many think.

With server-side tracking as the foundation, you can offer clients:

- Visibility: Show which pages are crawled by which AI systems, with real data instead of guesses

- Diagnosis: Identify where content is crawled but not cited, and what that says about content quality

- Strategy: Give content recommendations based on bot data, not gut feeling

- Reporting: Make GEO performance measurable, even if there aren't ready-made dashboards for it yet

That's also a strong selling point. GEO is new enough that most competitors aren't offering it yet. Whoever can show clients first how their website performs with AI systems has a real advantage in conversations.

Server-side tracking isn't just the technical foundation. It's what turns GEO from speculation into a discipline you can actually manage. And TAGGRS templates make exactly this setup faster and more scalable, without every agency having to build everything from scratch.